ANNOUNCEMENTS 🔊

AI Safety Collab 2025 Summer

Comprehensive 8-week introductory program run by ENAIS, split into 2 tracks: governance (following the BlueDot curriculum) and alignment (following the AI Safety Atlas textbook). The course is aimed at people who are interested in AI safety and relatively new to it, regardless of background.

🗓️ Register by: July 20th

AI Safety x Physics Grand Challenge

Research hackathon from Apart, designed as an entry point for physicists who want to tackle critical AI safety problems, working alongside peers who share their technical background and passion for rigorous problem-solving. There will be a project track and a paper track.

🗓️ Register by: July 25th

TOP PICKS 📑 🎧

Why Do Super Big Data Centers Matter?

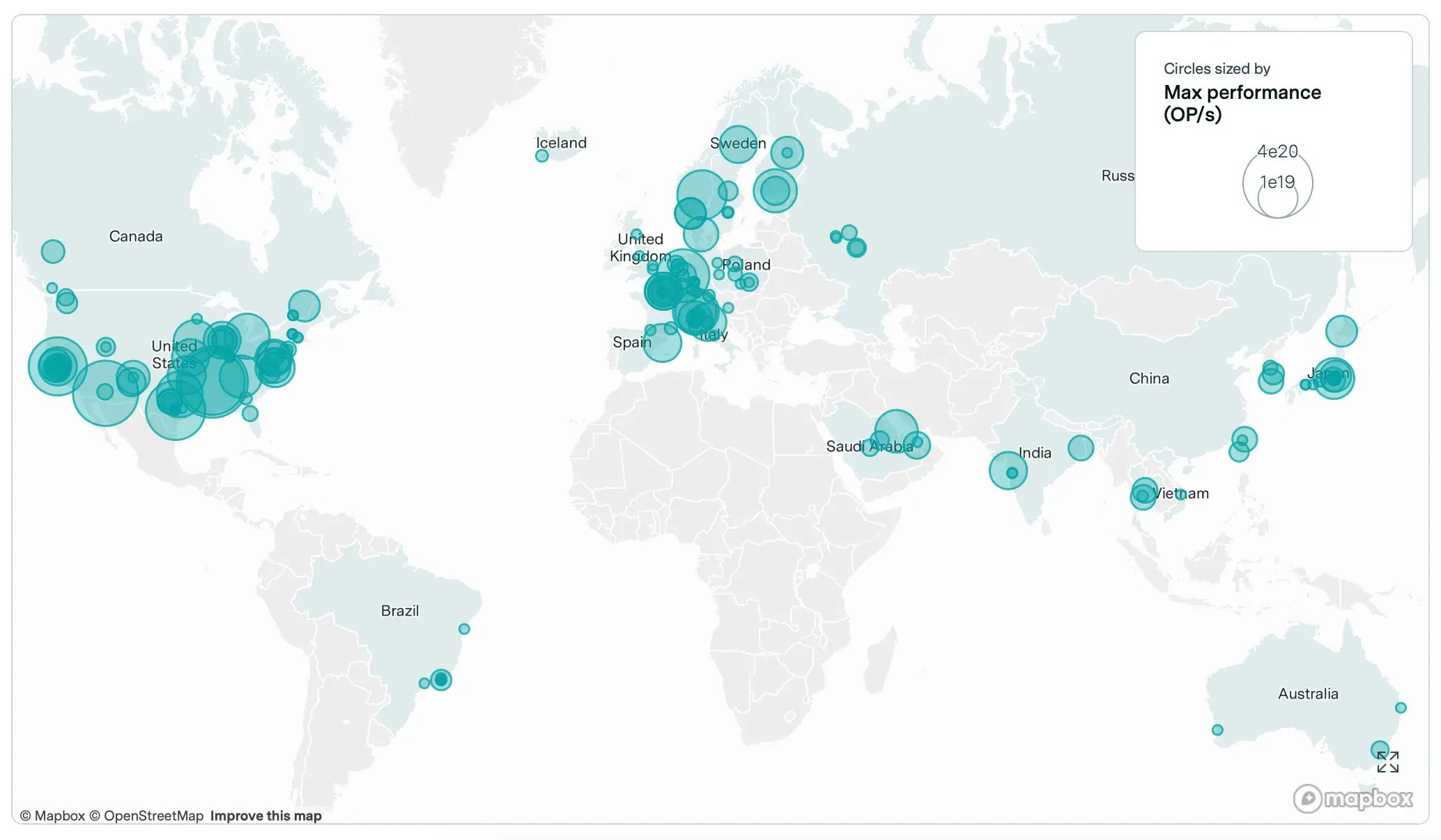

Big AI developers and countries with ambitious AI plans have been investing billions of dollars in very large data centers. Turkey has also announced plans for a large data center investment. If AI data centers are becoming one of the most strategic assets, do we know how they are concentrated today?

What Can Nuclear Policy Teach Us About AI Governance? Not Much, According To Two Policy Experts.

As AI leaders increasingly compare their technology to nuclear weapons and call for international governance bodies, two policy experts challenge this framing. Horowitz and Kahn argue that AI’s broad applicability, the impossibility of controlling it the way nuclear materials are controlled, and its continuous value creation make nuclear-style governance inappropriate. They advocate upgrading existing institutions like the FDA and aviation authorities rather than creating new international frameworks.

NEWS 🗞️

The U.S. Senate Rejected a Moratorium That Would Block State-Level AI Regulations

- In July 2025, the U.S. Senate voted 99 to 1 to reject a proposed moratorium on state-level AI regulations.

- This decision highlights the failure of the federal government’s attempt to establish a unified policy and effectively positions the states as the de facto AI regulators in the United States.

The United States and the United Kingdom Have Redefined “AI Safety” Policy Through a National Security Lens

- The U.S. rebranded its AI Safety Institute as the Center for AI Standards and Innovation (CAISI).

- Both countries refocused their AI safety agendas to emphasize national security, defense, and economic competition, narrowing the scope of what “AI safety” means.

- This strategic pivot deprioritizes broader societal harms (e.g., bias, discrimination), forming an “Anglosphere bloc” that contrasts with the EU’s rights-based regulatory approach.

A Revolution from Google DeepMind: AlphaGenome Illuminates the “Dark Matter” of the Genome

- In June 2025, Google DeepMind introduced AlphaGenome, a model that can predict the function of the 98% of human DNA that does not code for proteins.

- This breakthrough accelerates the understanding of the causes of genetic diseases, but also brings risks such as the design of AI-powered biological weapons.

- The model’s success shifts the AI safety debate away from model accuracy and toward the risk that the correct information it generates could be misused.

The “ChatGPT Moment” in Robotics: “World Models” That Understand the Physical World Are Being Developed

- “World models,” such as Meta’s V-JEPA 2 and 1X Technologies’ Redwood AI, enable robots to understand physical reality and predict the consequences of their actions.

- This advancement carries AI’s known problems, such as bias, fragility, and unpredictability, from the digital realm into the physical one.

- The development marks a shift from disembodied language models (LLMs) to embodied intelligence (robots) and necessitates a new research field called “Embodied AI Safety.”